Getting back to basics

This is week ten of the COVID-19 Data Dispatch. I'm reflecting on why I started this project, and asking for your help in determining its future.

Welcome back to the COVID-19 Data Dispatch.

This week’s issue is a little unusual. My girlfriend and I went to Maine for a brief vacation, and I spent some time reflecting on this newsletter. In this issue, I’m sharing those reflections and my thoughts for where this project may go next. I’m also asking you to help shape the project’s next few months.

As always, shares are appreciated. If you were forwarded this email, you can subscribe here:

Reflecting and looking forward

Candid of me reading Hank Green’s new book (very good), beneath some fall foliage. It sure is great to go outside!

I like to answer questions. I’m pretty good at explaining complicated topics, and when I don’t know the answer to something, I can help someone find it. These days, that tendency manifests in everyday conversations, whether it’s with my friend from high school or a Brooklyn dad whose campsite shares a firepit with my Airbnb. I make sure the person I’m talking to knows that I’m a science journalist, and I invite them to ask me their COVID-19 questions. I do my best to be clear about where I have expertise and where I don’t, and I try to point them to sources that will fill in my gaps.

I want this newsletter to feel like one of those conversations. I started it when hospitalization data switched from the auspices of the Centers for Disease Control and Prevention (CDC) to the Department of Health and Human Services (HHS), and I realized how intensely political agendas were twisting public understanding of data in this pandemic. I wanted to answer my friends’ and family members’ questions, and I wanted to do it in a way that could also become a resource for other journalists.

This is the newsletter’s tenth week. As I took a couple of days off to unplug, it seemed a fitting time to reflect on the project’s goals and on how I’d like to move forward.

What should data reporting look like in a pandemic?

This is a question I got over the weekend. How, exactly, have the CDC and the HHS failed in their data reporting since the novel coronavirus hit America back in January?

The most important quality for a data source is transparency. Any figure will only be a one-dimensional reflection of reality; it’s impossible for figures to be fully accurate. But it is possible for sources to make public all of the decisions leading to those figures. Where did you get the data? Whom did you survey? Whom didn’t you survey? What program did you use to compile the data, to clean it, to analyze it? How did you decide which numbers to make public? What equations did you use to arrive at your averages, your trendlines, your predictions? And so on and so forth. Reliable data sources make information public, they make representatives of the analysis team available for questions, and they make announcements when a mistake has been identified.

Transparency is especially important for COVID-19 data, as infection numbers drive everything from which states’ residents are required to quarantine for two weeks when they travel, to how many ICU beds at a local hospital must be ready for patients. Journalists like me need to know what data the government is using to make decisions and where those numbers are coming from so that we can hold the government accountable; but beyond that, readers like you need to know exactly what is happening in your communities and how you can mitigate your own personal risk levels.

In my ideal data reporting scenario, the CDC or another HHS agency would be extremely public about all the COVID-19 data it is collecting. The agency would publish these data in a public portal, yes, but this would be the bare minimum. It would also publish a detailed methodology explaining how data are collected from labs, hospitals, and other clinical sites, and it would publish a detailed data dictionary written in easily accessible language.

And, most importantly, the agency would hold regular public briefings. I’m envisioning something like Governor Cuomo’s PowerPoints, but led by the actual public health experts, and with substantial time for Q&A. Agency staff should also be available to answer questions from the public and direct them to resources, such as the CDC’s pages on childcare during COVID-19 or their local registry of test sites. Finally, it should go without saying that, in my ideal scenario, every state and local government would follow the same definitions and methodology for reporting data.

Why am I doing this newsletter?

The CDC now publishes a national dataset of COVID-19 cases and deaths, and the HHS publishes a national dataset of PCR tests. Did you know about them? Have you seen any public briefings led by health experts about these data? Even as I wrote up this description, I realized how deeply our federal government has failed at even the basics of data transparency.

Neither the CDC nor HHS even published any testing data until May. Meanwhile, state and local public health agencies are largely left to their own devices, with some common definitions but few widely enforced standards. Florida publishes massive PDF reports, which fail to include the details of their calculations. Texas dropped a significant number of tests in August without clear explanation. Many states fail to report antigen test counts, leaving us with a black hole in national testing data.

Research efforts and volunteer projects, such as Johns Hopkins’ COVID-19 Tracker and the COVID Tracking Project, have stepped in to fill the gap left by federal public health agencies. The COVID Tracking Project, for example, puts out daily tweets and weekly blog posts reporting on the state of COVID-19 in the U.S. I’m proud to be a small part of this vital communication effort, but I have to acknowledge that the Project does a tiny fraction of the work that an agency like the CDC could take on.

Personally, I feel a responsibility to learn everything I can about COVID-19 data, and share it with an audience that can help hold me accountable to my work. So, there it is: this newsletter exists to fill a communication gap. I want to tell you what state and federal agencies are doing—or aren’t doing—to provide data on how COVID-19 is impacting Americans. And I want to help you attain some data literacy along the way. I don’t have fancy PowerPoints like Cuomo or fancy graphics like the COVID Tracking Project (though my Tableau skills are improving!). But I can ask questions, and I can answer them. I hope you’re reading this because you find that useful, and I hope this project can become more useful as it grows.

What’s next?

America is moving into what may be a long winter, with schools open and the seasonal flu incoming. (If you haven’t yet, this is your reminder: get your flu shot!) I’m in no position to hypothesize about second waves or vaccine deployment, but I do believe this pandemic will not go away any time soon.

With that in mind, I’d like to settle this newsletter in for the long haul. And I can’t do it alone. In the coming months, I want this project to become more reader-focused. Here are a couple of ideas I have about how to make that happen; please reach out if you have others!

Reader-driven topics: Thus far, the subjects of this newsletter have been driven by whatever I am excited and/or angry about in a given week. I would like to broaden this to also include news items, data sources, and other topics that come from you.

Answering your questions: Is there a COVID-19 metric that you’ve seen in news articles, but aren’t sure you understand? Is there a data collection process that you’d like to know more about? Is there a seemingly-simple thing about the virus that you’ve been afraid to ask anywhere else? Send me your COVID-19 questions, data or otherwise, and I will do my best to answer.

Collecting data sources: In the first nine weeks of this project, I’ve featured a lot of data sources, and the number will only grow as I continue. It might be helpful if I put all those sources together into one public spreadsheet to make a master resource, huh? (I am a little embarrassed that I didn’t think of this one sooner.) I’ll work on this spreadsheet, and share it with you all next week.

Events?? One of my goals with this project is data literacy, and I’d like to make that work a little more hands-on. I’m thinking about potential online workshops and collaborations with other organizations. I’m also looking into potential funding options for such events; there will hopefully be more news to come on this front in the coming weeks.

In order to gather some of your ideas without making you email/comment/Tweet at me (though these methods are always welcome), I threw together a quick Google form. It should only take a few minutes to fill out, unless you get really crazy with the short answer questions.

COVID source callout

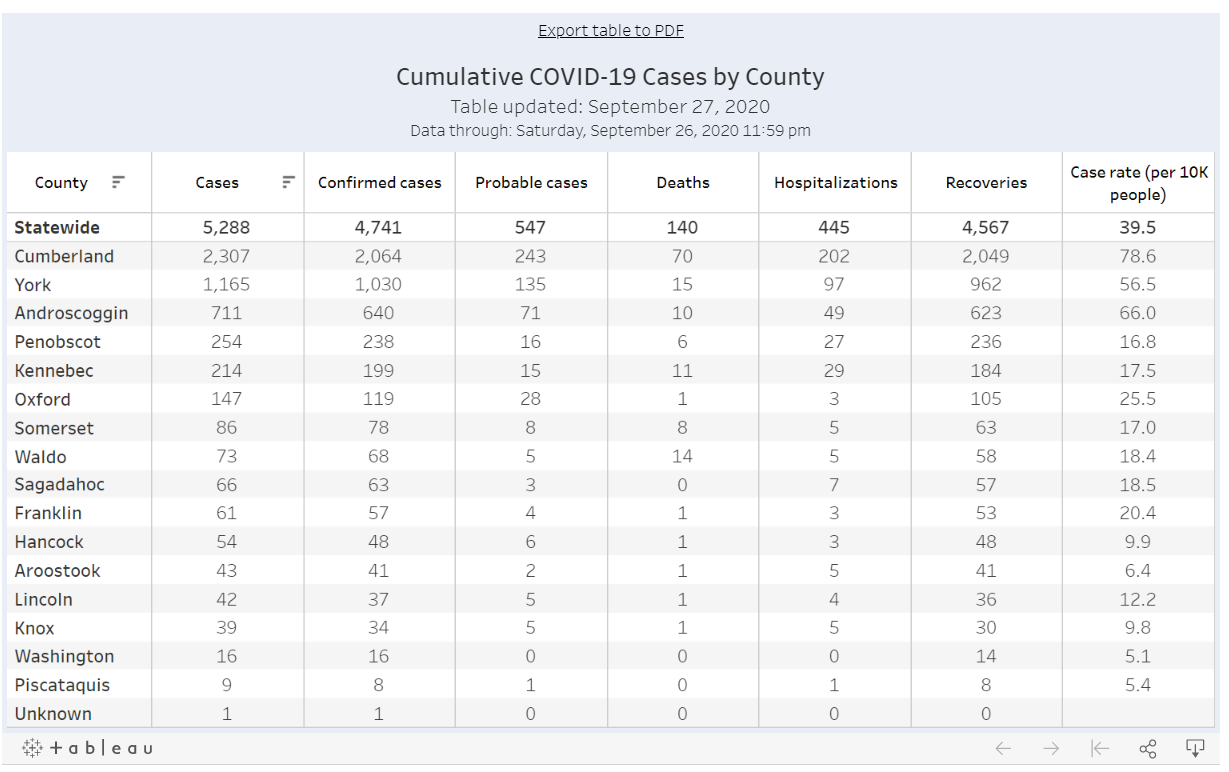

I visited Maine this week, so it seems fitting to evaluate the state’s COVID-19 dashboard on my way home.

Look at how clean this is!

Maine was actually one of my favorite state dashboards for a while. Everything is on one page. A summary section at the top makes it easy to see all the most important numbers, and then there’s a tabbed panel with mini-pages on trends and demographic data. It’s all fairly easy to navigate, and although there was a period of a few weeks where Maine’s demographic data tab never loaded for me, I never held that against the state. Maine has a clear data timestamp, and it was also one of the first states to properly separate out PCR and antibody testing numbers.

Now, however, Maine is lumping PCR and antigen tests together. This means that counts of these two test types are being combined in a single figure. Both PCR and antigen tests are diagnostic, but they have differing sensitivities and serve different purposes, and should be reported separately; combining them may lead to inaccurate test positivity calculations and other issues. I expect this type of misleading reporting from, say, Florida or Rhode Island, but not from Maine. Be better, Maine!
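For the data-curious, here is a rough sketch of why lumping matters for test positivity. The counts below are hypothetical numbers I made up for illustration; they are not Maine's figures, and the `positivity` helper is just a name chosen for this example. The point is only that a single combined rate can sit far from the rate for either test type.

```python
def positivity(positives, total_tests):
    """Return the share of tests that came back positive."""
    return positives / total_tests


# Hypothetical counts, invented for illustration only (not Maine's data).
pcr = {"positive": 500, "total": 10_000}
antigen = {"positive": 20, "total": 4_000}

# Positivity computed separately for each test type.
pcr_rate = positivity(pcr["positive"], pcr["total"])
antigen_rate = positivity(antigen["positive"], antigen["total"])

# Positivity when both test types are lumped into one figure.
lumped_rate = positivity(
    pcr["positive"] + antigen["positive"],
    pcr["total"] + antigen["total"],
)

print(f"PCR only:     {pcr_rate:.1%}")
print(f"Antigen only: {antigen_rate:.1%}")
print(f"Lumped:       {lumped_rate:.1%}")
```

With these made-up counts, the lumped rate lands below the PCR-only rate and above the antigen-only rate, so a reader looking at the single combined figure cannot tell what is happening with either kind of test.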

(P.S. COVID Tracking Project blog post on antigen testing coming soon. It’ll be worth the hype, I promise.)

More recommended reading

My recent Stacker bylines

News from the COVID Tracking Project

Why The COVID Tracking Project’s Death Count Hasn’t Hit 200,000

Trends Improve Except in the Midwest: This Week in COVID-19 Data, Sep 24

Bonus

New, Secretive Data System Shaping Federal Pandemic Response (The Center for Public Integrity)

Covid-19 Vaccines Could End Up With Bias Built Right In (WIRED)

That’s all for today! I’ll be back next week with more data news.

If you have any feedback for me—or if you want to ask me your COVID-19 data questions—you can send me an email (betsyladyzhets@gmail.com) or comment on this post directly:

This newsletter is a labor of love for me each week. If you appreciate the news and resources, I’d appreciate a tip:
